Retrieval-Augmented Generation (RAG) helps LLM-based applications work with private, up-to-date and accurate information. The field is moving so fast that new approaches appear almost weekly.

In the simplest case, RAG is implemented with external documents, an embedding model and a vector database. The external documents are split into chunks, an embedding is generated for each chunk, and every embedding is stored in the vector database. When a user sends a request, the query is embedded as well, and the vector database returns the most similar chunks. These retrieved chunks form the context that grounds the LLM, and a grounded request improves the quality of the answer.
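The basic pipeline can be sketched in a few lines of Python. This is a toy, self-contained illustration built on stated assumptions: a bag-of-words term-count vector stands in for a real embedding model, an in-memory list stands in for the vector database, and `chunk_text`, `InMemoryVectorStore` and `build_prompt` are hypothetical names, not any library's API. The sample documents are made up.

```python
import math
import re
from collections import Counter


def chunk_text(text: str, chunk_size: int = 40, overlap: int = 10) -> list[str]:
    """Split text into fixed-size, slightly overlapping word windows (assumes chunk_size > overlap)."""
    words = text.split()
    step = chunk_size - overlap
    return [" ".join(words[i:i + chunk_size]) for i in range(0, max(len(words) - overlap, 1), step)]


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: a bag-of-words term-count vector."""
    return Counter(re.findall(r"\w+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


class InMemoryVectorStore:
    """Minimal stand-in for a vector database: stores (embedding, chunk) pairs."""

    def __init__(self) -> None:
        self.items: list[tuple[Counter, str]] = []

    def add(self, chunk: str) -> None:
        self.items.append((embed(chunk), chunk))

    def search(self, query: str, k: int = 3) -> list[str]:
        # Embed the query the same way as the chunks and rank by cosine similarity.
        q = embed(query)
        ranked = sorted(self.items, key=lambda item: cosine(q, item[0]), reverse=True)
        return [chunk for _, chunk in ranked[:k]]


def build_prompt(question: str, chunks: list[str]) -> str:
    """Ground the LLM request by placing the retrieved chunks in the prompt."""
    context = "\n\n".join(chunks)
    return f"Answer using only the context below.\n\nContext:\n{context}\n\nQuestion: {question}"


# Usage with made-up documents.
docs = [
    "The Eiffel Tower is located in Paris and was completed in 1889.",
    "Python is a programming language created by Guido van Rossum.",
    "Paris is the capital of France.",
]
store = InMemoryVectorStore()
for doc in docs:
    for chunk in chunk_text(doc):
        store.add(chunk)

question = "When was the Eiffel Tower completed?"
print(build_prompt(question, store.search(question, k=1)))
```

In a real system, `embed` would call an embedding model and `InMemoryVectorStore` would be replaced by a vector database; the shape of the pipeline stays the same.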

Retrieving only individual chunks to ground the LLM limits its understanding of the document as a whole. This is what RAPTOR (Recursive Abstractive Processing for Tree-Organized Retrieval) aims to fix. RAPTOR clusters the chunks and generates a text summary for each cluster. The summaries are then clustered and summarized again, recursively, which builds a tree: the original chunks are the leaves, chunks from the same cluster are siblings, and the summaries are their parent nodes. The paper reports state-of-the-art results; for example, coupling RAPTOR retrieval with GPT-4 improves the best performance on the QuALITY benchmark by 20% in absolute accuracy.

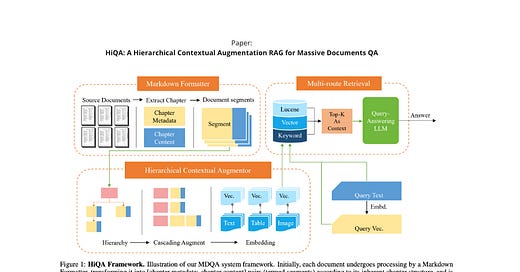
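The recursive cluster-then-summarize loop can be sketched as follows. This is a simplified illustration, not the paper's implementation: the paper clusters embeddings with Gaussian Mixture Models and summarizes clusters with an LLM, whereas here a naive greedy similarity grouping (`cluster`) and a truncating placeholder (`summarize`) stand in for both, and bag-of-words vectors stand in for embeddings. All names are hypothetical. The `retrieve` function ranks nodes from every layer together, which corresponds to the "collapsed tree" retrieval strategy described in the RAPTOR paper.

```python
import math
import re
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class Node:
    text: str
    level: int = 0  # 0 = original chunk (leaf), 1+ = summary layers
    children: list["Node"] = field(default_factory=list)


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model (bag-of-words counts)."""
    return Counter(re.findall(r"\w+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def cluster(nodes: list[Node], threshold: float = 0.2, max_size: int = 3) -> list[list[Node]]:
    """Hypothetical greedy clustering: join the first group whose first member is similar enough."""
    groups: list[list[Node]] = []
    for node in nodes:
        for group in groups:
            if len(group) < max_size and cosine(embed(node.text), embed(group[0].text)) >= threshold:
                group.append(node)
                break
        else:
            groups.append([node])
    return groups


def summarize(texts: list[str], max_words: int = 30) -> str:
    """Placeholder for an LLM summarization call: concatenate and truncate."""
    return " ".join(" ".join(texts).split()[:max_words])


def build_tree(chunks: list[str], max_levels: int = 5) -> list[Node]:
    """Build the tree bottom-up and return all of its nodes (leaves and summaries)."""
    layer = [Node(text=c) for c in chunks]
    all_nodes = list(layer)
    for level in range(1, max_levels + 1):
        groups = cluster(layer)
        if len(groups) == len(layer):  # nothing could be merged: stop recursing
            break
        layer = [Node(summarize([n.text for n in g]), level, g) for g in groups]
        all_nodes.extend(layer)
    return all_nodes


def retrieve(nodes: list[Node], query: str, k: int = 2) -> list[Node]:
    """'Collapsed tree' style retrieval: rank nodes from all layers together."""
    q = embed(query)
    return sorted(nodes, key=lambda n: cosine(q, embed(n.text)), reverse=True)[:k]


chunks = [
    "cats are small pets that like to sleep",
    "cats like to hunt mice and sleep",
    "rust is a systems programming language",
    "rust programming language focuses on memory safety",
]
tree = build_tree(chunks)
for node in retrieve(tree, "memory safety in rust"):
    print(node.level, node.text)
```

Because summaries live in the same pool as the leaves, a query can match either a specific chunk or a higher-level summary of several related chunks.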

HiQA (Hierarchical Contextual Augmentation RAG) proposes a somewhat different approach. It consists of three main components: the Markdown Formatter (MF), the Hierarchical Contextual Augmentor (HCA) and the Multi-Route Retriever (MRR). First, the document is converted into Markdown, and the resulting Markdown is enriched with metadata. Next, HCA extracts structured information from the Markdown. Text, tables and images are processed differently, and embeddings are generated for each type of content. The last step is choosing the documents that ground the LLM, which is the job of the Multi-Route Retriever. This module combines vector similarity matching, the Elasticsearch search engine, and keyword matching based on the Critical Named Entity Detection (CNED) method.
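The idea of carrying document structure into each chunk can be illustrated with a small sketch. It assumes the document has already been converted to Markdown and handles text only: each section under a Markdown heading becomes a chunk, and the chain of headings above it is prepended as metadata before embedding. This is a simplified illustration of hierarchical contextual augmentation, not HiQA's actual HCA; tables, images and the paper's other metadata are left out, and `split_markdown` and `Section` are hypothetical names. The manual text is made up.

```python
import re
from dataclasses import dataclass

HEADING = re.compile(r"^(#{1,6})\s+(.*\S)\s*$")


@dataclass
class Section:
    path: list[str]  # heading hierarchy from the top of the document down to this section
    body: str

    def augmented_text(self) -> str:
        """Text to embed: the heading path as metadata, followed by the section body."""
        return f"[{' > '.join(self.path)}]\n{self.body}"


def split_markdown(markdown: str) -> list[Section]:
    """Split Markdown along its headings instead of into fixed-size chunks."""
    sections: list[Section] = []
    path: list[str] = []
    buffer: list[str] = []

    def flush() -> None:
        body = "\n".join(buffer).strip()
        if body:
            sections.append(Section(path=list(path), body=body))
        buffer.clear()

    for line in markdown.splitlines():
        match = HEADING.match(line)
        if match:
            flush()  # close the previous section before starting a new one
            level = len(match.group(1))
            del path[level - 1:]  # drop headings at the same or a deeper level
            path.append(match.group(2))
        else:
            buffer.append(line)
    flush()
    return sections


manual = """# Router X200
Quick overview of the device.
## Installation
Plug the cable into the WAN port.
## Reset
Hold the reset button for 10 seconds.
"""
for section in split_markdown(manual):
    print(section.augmented_text())
```

A short section such as "Hold the reset button for 10 seconds." says little on its own; with the prepended path `[Router X200 > Reset]` its embedding also reflects which product and which chapter it belongs to.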

RAPTOR and HiQA differ in the following areas:

Chunking: fixed-size chunks (RAPTOR) vs splitting along the document's natural chapters (HiQA)

Enrichment: text summarization (RAPTOR) vs metadata and augmentation based on content type (HiQA)

Retrieval: vector similarity search (RAPTOR) vs a ranking that combines three different approaches (HiQA)
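The difference in retrieval can be made concrete with a sketch of multi-route retrieval. Three toy routes rank the same candidate chunks: cosine similarity over term counts (standing in for vector matching), a count of query-term hits (a crude stand-in for a search engine such as Elasticsearch), and exact matching of code-like tokens such as product names (a rough stand-in for CNED). The per-route rankings are then merged with Reciprocal Rank Fusion (RRF). RRF is an assumption made for this sketch, not necessarily what HiQA uses, and all function names are hypothetical.

```python
import math
import re
from collections import Counter


def tokens(text: str) -> list[str]:
    return re.findall(r"\w+", text.lower())


def rank_by(score, docs: list[str]) -> list[int]:
    """Return document indices sorted from best to worst score."""
    return sorted(range(len(docs)), key=lambda i: score(docs[i]), reverse=True)


def vector_route(query: str, docs: list[str]) -> list[int]:
    # Stand-in for embedding similarity: cosine over term-count vectors.
    q = Counter(tokens(query))

    def score(doc: str) -> float:
        d = Counter(tokens(doc))
        dot = sum(q[t] * d[t] for t in q.keys() & d.keys())
        norm = math.sqrt(sum(v * v for v in q.values())) * math.sqrt(sum(v * v for v in d.values()))
        return dot / norm if norm else 0.0

    return rank_by(score, docs)


def keyword_route(query: str, docs: list[str]) -> list[int]:
    # Crude stand-in for a search engine route: number of query-term hits in the chunk.
    q = set(tokens(query))
    return rank_by(lambda doc: sum(t in q for t in tokens(doc)), docs)


def entity_route(query: str, docs: list[str]) -> list[int]:
    # Rough stand-in for CNED: treat upper-case codes such as "X200" as critical
    # entities and require an exact, case-sensitive match.
    entities = set(re.findall(r"\b[A-Z0-9][A-Z0-9-]{2,}\b", query))
    return rank_by(lambda doc: sum(e in doc for e in entities), docs)


def reciprocal_rank_fusion(rankings: list[list[int]], k: int = 60) -> list[int]:
    """Merge rankings: each document scores sum(1 / (k + rank)) over all routes."""
    scores: Counter = Counter()
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    return [doc_id for doc_id, _ in scores.most_common()]


docs = [
    "The X100 router can be reset from the web interface.",
    "To reset the X200 router, hold the reset button for 10 seconds.",
    "Routers forward packets between networks.",
]
query = "How do I reset the X200 router?"
routes = [vector_route(query, docs), keyword_route(query, docs), entity_route(query, docs)]
for doc_id in reciprocal_rank_fusion(routes):
    print(docs[doc_id])
```

Fusing ranks rather than raw scores avoids having to calibrate scores that live on very different scales (cosine similarity, hit counts, entity matches).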

Combining approaches can improve RAG results even further. Corrective RAG (CRAG) is designed as a plug-and-play component, so it can be added on top of either of the two methods above. The idea is to evaluate each chunk or document we plan to use for grounding the LLM. Suppose the RAG pipeline retrieves five chunks. CRAG's retrieval evaluator calculates a confidence score for each of them, and based on these scores the retrieval is classified as Correct, Ambiguous or Incorrect. If it is Correct, the chunks are used for grounding after knowledge refinement that filters out their irrelevant parts. If it is Incorrect, the retrieved chunks are discarded and a web search is executed instead. In the Ambiguous case, knowledge refinement and web search are used together.
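The CRAG decision logic can be sketched as follows. The thresholds, the term-overlap `overlap_score` evaluator and the `fake_web_search` stub are hypothetical placeholders: the paper uses a lightweight trained retrieval evaluator and a real web search. The action rule roughly follows the paper's description: Correct if at least one chunk scores above the upper threshold, Incorrect if all chunks score below the lower threshold, Ambiguous otherwise. Knowledge refinement is approximated by splitting chunks into sentences and keeping only the relevant ones.

```python
import re
from typing import Callable


def overlap_score(query: str, text: str) -> float:
    """Hypothetical evaluator: fraction of query terms that appear in the text."""
    q = set(re.findall(r"\w+", query.lower()))
    t = set(re.findall(r"\w+", text.lower()))
    return len(q & t) / len(q) if q else 0.0


def decide_action(scores: list[float], upper: float = 0.6, lower: float = 0.2) -> str:
    if any(s > upper for s in scores):
        return "correct"
    if all(s < lower for s in scores):
        return "incorrect"
    return "ambiguous"


def refine(query: str, chunks: list[str], keep_above: float = 0.5) -> list[str]:
    """Knowledge refinement stand-in: split chunks into sentences, keep the relevant ones."""
    sentences = [s for c in chunks for s in re.split(r"(?<=[.!?])\s+", c.strip()) if s]
    return [s for s in sentences if overlap_score(query, s) >= keep_above]


def corrective_context(
    query: str, chunks: list[str], web_search: Callable[[str], list[str]]
) -> tuple[str, list[str]]:
    action = decide_action([overlap_score(query, c) for c in chunks])
    if action == "correct":
        return action, refine(query, chunks)
    if action == "incorrect":
        return action, web_search(query)  # discard the retrieved chunks entirely
    return action, refine(query, chunks) + web_search(query)


def fake_web_search(query: str) -> list[str]:
    """Stub standing in for a real web search call."""
    return [f"(web result for: {query})"]


query = "capital of France"
for chunks in (
    ["Paris is the capital of France. It hosts the Louvre."],
    ["Bananas are rich in potassium."],
    ["France has many regions."],
):
    print(corrective_context(query, chunks, fake_web_search))
```

The three sample inputs trigger the Correct, Incorrect and Ambiguous branches in that order.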

Some thoughts:

There is a tendency to use LLMs inside the RAG pipeline itself: for summarization, augmentation or scoring. Some approaches, such as Agentic RAG, rely entirely on the reasoning capabilities of LLMs.

Ranking is a hard problem. In effect, we need to build parts of a search engine into the RAG pipeline. Commercial solutions such as Cohere Rerank (https://cohere.com/rerank) make this relatively easy.

Chunk size, the number of chunks, the embedding model and metadata usage are just a few of the questions to decide before building a RAG system.
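The two-stage pattern behind reranking, together with some of the knobs listed above, can be sketched as follows. A cheap first stage keeps `retrieve_top_k` candidates, and a more expensive scorer reorders them so that only `rerank_top_n` chunks reach the LLM. The `rerank_score` function is a toy stand-in (term overlap plus matching word pairs) for a real reranking model such as Cohere Rerank; it does not call or imitate that service's API. `RagConfig` and its default values are hypothetical, and the sample documents are made up.

```python
import re
from dataclasses import dataclass


@dataclass
class RagConfig:
    # Hypothetical defaults; good values depend on the corpus and the models used.
    chunk_size: int = 50      # words per chunk
    retrieve_top_k: int = 10  # candidates kept by the cheap first stage
    rerank_top_n: int = 3     # chunks that finally go into the LLM prompt


def words(text: str) -> list[str]:
    return re.findall(r"\w+", text.lower())


def bigrams(ws: list[str]) -> set[tuple[str, str]]:
    return set(zip(ws, ws[1:]))


def chunk(text: str, size: int) -> list[str]:
    w = text.split()
    return [" ".join(w[i:i + size]) for i in range(0, len(w), size)]


def first_stage_score(query: str, text: str) -> float:
    # Cheap, recall-oriented score: fraction of query terms present in the chunk.
    q = set(words(query))
    return len(q & set(words(text))) / len(q) if q else 0.0


def rerank_score(query: str, text: str) -> float:
    # Toy stand-in for a reranking model: also rewards word pairs that appear in the
    # same order as in the query, so chunks matching the query's phrasing rank higher.
    return first_stage_score(query, text) + len(bigrams(words(query)) & bigrams(words(text)))


def retrieve_and_rerank(query: str, documents: list[str], cfg: RagConfig) -> list[str]:
    chunks = [c for d in documents for c in chunk(d, cfg.chunk_size)]
    candidates = sorted(chunks, key=lambda c: first_stage_score(query, c), reverse=True)
    candidates = candidates[: cfg.retrieve_top_k]
    reranked = sorted(candidates, key=lambda c: rerank_score(query, c), reverse=True)
    return reranked[: cfg.rerank_top_n]


docs = [
    "The router clock can be reset.",
    "Reset the router by holding the button.",
    "Sunny weather today.",
]
cfg = RagConfig(retrieve_top_k=2, rerank_top_n=1)
print(retrieve_and_rerank("reset the router", docs, cfg))
```

Both candidate chunks contain every query term, so the first stage cannot separate them; the reranker breaks the tie in favor of the chunk that matches the query's phrasing.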

Resources:

https://arxiv.org/abs/2401.18059 - RAPTOR: Recursive Abstractive Processing for Tree-Organized Retrieval

https://arxiv.org/abs/2401.15884 - Corrective Retrieval Augmented Generation

https://arxiv.org/abs/2402.01767 - HiQA: A Hierarchical Contextual Augmentation RAG for Massive Documents QA

https://blog.llamaindex.ai/agentic-rag-with-llamaindex-2721b8a49ff6 - Agentic RAG With LlamaIndex

https://cohere.com/rerank - Cohere for reranking