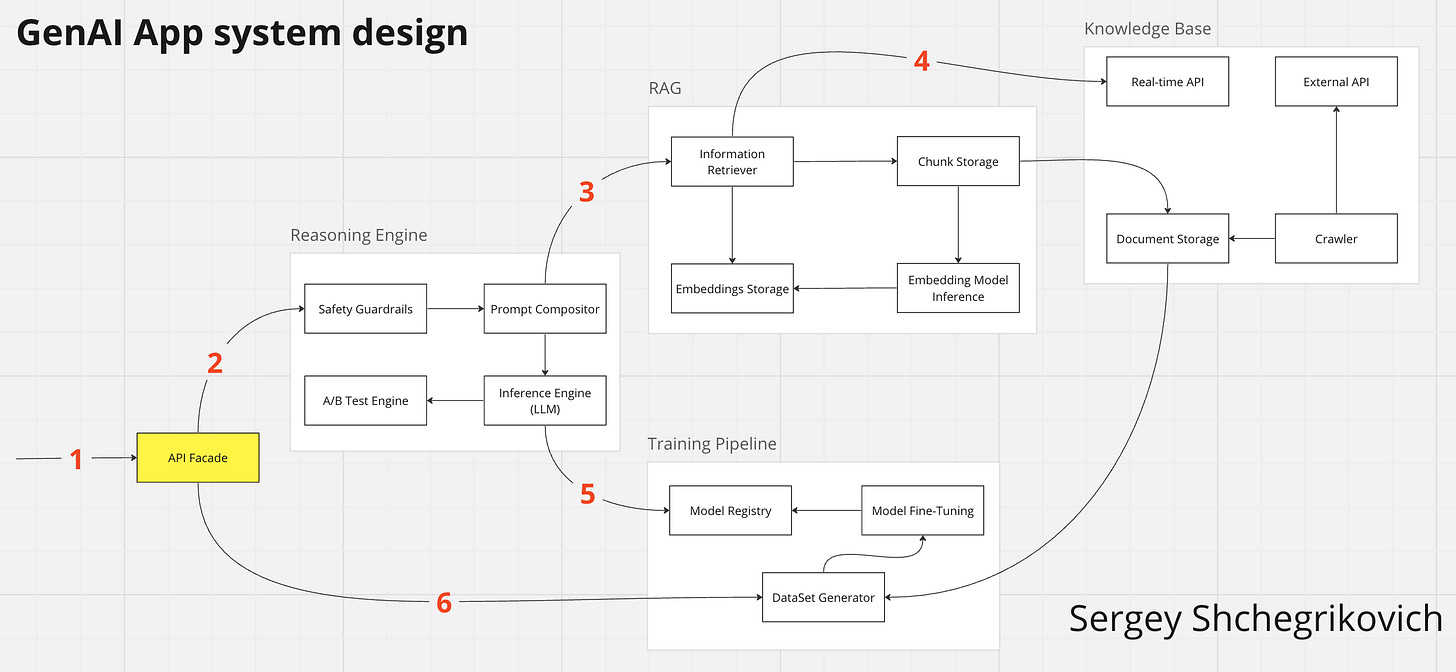

Architecture of a GenAI app

The development of a GenAI app starts with experiments and prototypes. At some point, we need to switch to the big picture and, on a high level, understand what the GenAI app consists of.

I've attached an architecture diagram showing one of many possible views of a GenAI app. The Knowledge Base provides additional information for the whole system: it stores your customers' private data and any other information required while executing a customer's query.

For fast and accurate information retrieval, we add RAG. Retrieval-augmented generation enriches customers' queries with up-to-date and more precise information, that is, information that was not available to the LLM during training.
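To make the retrieval step concrete, here is a minimal sketch of how a query could be enriched with Knowledge Base content before it reaches the LLM. It assumes a tiny in-memory knowledge base and uses bag-of-words cosine similarity as a stand-in for a real Embedding Model; the names `KNOWLEDGE_BASE`, `retrieve`, and `build_prompt` are made up for illustration and are not part of the diagram.

```python
import math
import re
from collections import Counter

# Hypothetical in-memory Knowledge Base; a real one would be a document or vector store.
KNOWLEDGE_BASE = [
    "Refunds are processed within 5 business days.",
    "Premium support is available on weekdays from 9 to 17.",
    "The mobile app supports offline mode since version 3.2.",
]


def tokenize(text: str) -> Counter:
    """Lowercase bag-of-words vector; a toy replacement for an embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, documents: list[str], top_k: int = 2) -> list[str]:
    """Return the top_k documents most similar to the query."""
    q = tokenize(query)
    ranked = sorted(documents, key=lambda d: cosine(q, tokenize(d)), reverse=True)
    return ranked[:top_k]


def build_prompt(query: str, documents: list[str], top_k: int = 2) -> str:
    """Prepend the retrieved context to the customer's query."""
    context = "\n".join(f"- {doc}" for doc in retrieve(query, documents, top_k))
    return f"Context:\n{context}\n\nQuestion: {query}"


if __name__ == "__main__":
    print(build_prompt("How long do refunds take?", KNOWLEDGE_BASE, top_k=1))
```

In a production setup the similarity function would likely be replaced by learned embeddings and a vector index, but the shape of the step stays the same: rank, select, and prepend.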

Once we have the customer's request and the additional information, we can ask the LLM to generate an answer in the Reasoning Engine. This is where the prompt magic happens: in the Reasoning Engine, we test, execute, and validate prompts. Safety Guardrails might be the most complex and vital part of the process.

So when the customer makes a query, we send it to the Reasoning Engine, which asks RAG for additional content. The actual LLM to use is provided by the Model Registry, which is part of the Training Pipeline. To increase the quality of the application, we use the DataSet Generator, which knows about previous successful customer queries and can turn the Knowledge Base into prompts for fine-tuning.
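The request flow described above can be sketched roughly as follows. This is only an illustration under stated assumptions: the class names mirror the boxes on the diagram, but their methods (`register`, `get_active`, `passes_guardrails`, `answer`) are hypothetical, the guardrail is a naive keyword check, and the LLM is a stub function rather than a real model.

```python
from dataclasses import dataclass, field
from typing import Callable

# An "LLM" here is any function from prompt text to answer text (assumption).
LLM = Callable[[str], str]


@dataclass
class ModelRegistry:
    """Keeps track of available models and which one is currently served."""
    models: dict[str, LLM] = field(default_factory=dict)
    active: str = ""

    def register(self, name: str, model: LLM) -> None:
        self.models[name] = model
        self.active = name  # the most recently registered model becomes active

    def get_active(self) -> LLM:
        return self.models[self.active]


@dataclass
class ReasoningEngine:
    """Receives the query, asks the retriever for context, and calls the LLM."""
    registry: ModelRegistry
    retriever: Callable[[str], list[str]]
    blocked_terms: tuple[str, ...] = ("password",)  # toy guardrail list

    def passes_guardrails(self, text: str) -> bool:
        lowered = text.lower()
        return not any(term in lowered for term in self.blocked_terms)

    def answer(self, query: str) -> str:
        if not self.passes_guardrails(query):
            return "Sorry, I can't help with that request."
        context = "\n".join(self.retriever(query))
        prompt = f"Context:\n{context}\n\nQuestion: {query}"
        reply = self.registry.get_active()(prompt)
        # Validate the output as well as the input.
        return reply if self.passes_guardrails(reply) else "Sorry, I can't share that."


def stub_model(prompt: str) -> str:
    """Placeholder for a real LLM call."""
    return f"[stub answer based on {len(prompt)} prompt characters]"


if __name__ == "__main__":
    registry = ModelRegistry()
    registry.register("stub-v1", stub_model)
    engine = ReasoningEngine(registry, retriever=lambda q: ["Refunds take 5 business days."])
    print(engine.answer("How long do refunds take?"))
```

Keeping the model behind the registry means the Training Pipeline can publish a newly fine-tuned model without the Reasoning Engine changing at all.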

This scheme can be improved in many ways, but I'll focus on fine-tuning. There is a whole component for this: the Training Pipeline. In addition, we can fine-tune the Embedding Model and the Information Retriever in RAG. The ability to store text, audio, and visual content in the Knowledge Base adds further complexity.
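As a rough illustration of what the DataSet Generator could hand to the Training Pipeline, the sketch below filters logged interactions down to the successful ones and writes them as JSON Lines. The `Interaction` record and the `prompt`/`completion` keys are assumptions made for this example; the actual schema depends on the fine-tuning tooling you use.

```python
import json
from dataclasses import dataclass


@dataclass
class Interaction:
    """One logged customer exchange (hypothetical record shape)."""
    query: str
    answer: str
    successful: bool  # e.g. derived from user feedback or an evaluation metric


def to_finetuning_jsonl(interactions: list[Interaction]) -> str:
    """Serialize only the successful interactions, one JSON object per line."""
    lines = [
        json.dumps({"prompt": item.query, "completion": item.answer})
        for item in interactions
        if item.successful
    ]
    return "\n".join(lines)


if __name__ == "__main__":
    history = [
        Interaction("How long do refunds take?", "Within 5 business days.", True),
        Interaction("Is there a desktop app?", "I am not sure.", False),
    ]
    print(to_finetuning_jsonl(history))
```

How "successful" is defined is the hard part in practice; here it is just a boolean flag on each record.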

This high-level view doesn't cover logging, monitoring, privacy and encryption topics. Tooling for visualizing the system's data flow might be as crucial as evaluation metrics for each component.

If you want to read more about the architecture of LLM applications, see these resources:

YouTube - Papers Club "Engineering an End-to-End

https://a16z.com/emerging-architectures-for-llm-applications/ - Emerging Architectures for LLM Applications

https://github.com/run-llama/ai-engineer-workshop/blob/main/presentation.pdf - Building, Evaluating, and Optimizing your RAG App for Production